Introducing ORPHEUS: Skill-Based AI Orchestration Framework

How ORPHEUS reimagines AI orchestration and why Anthropic’s latest thinking confirms the shift is real. GitHub Repository | Core Principles

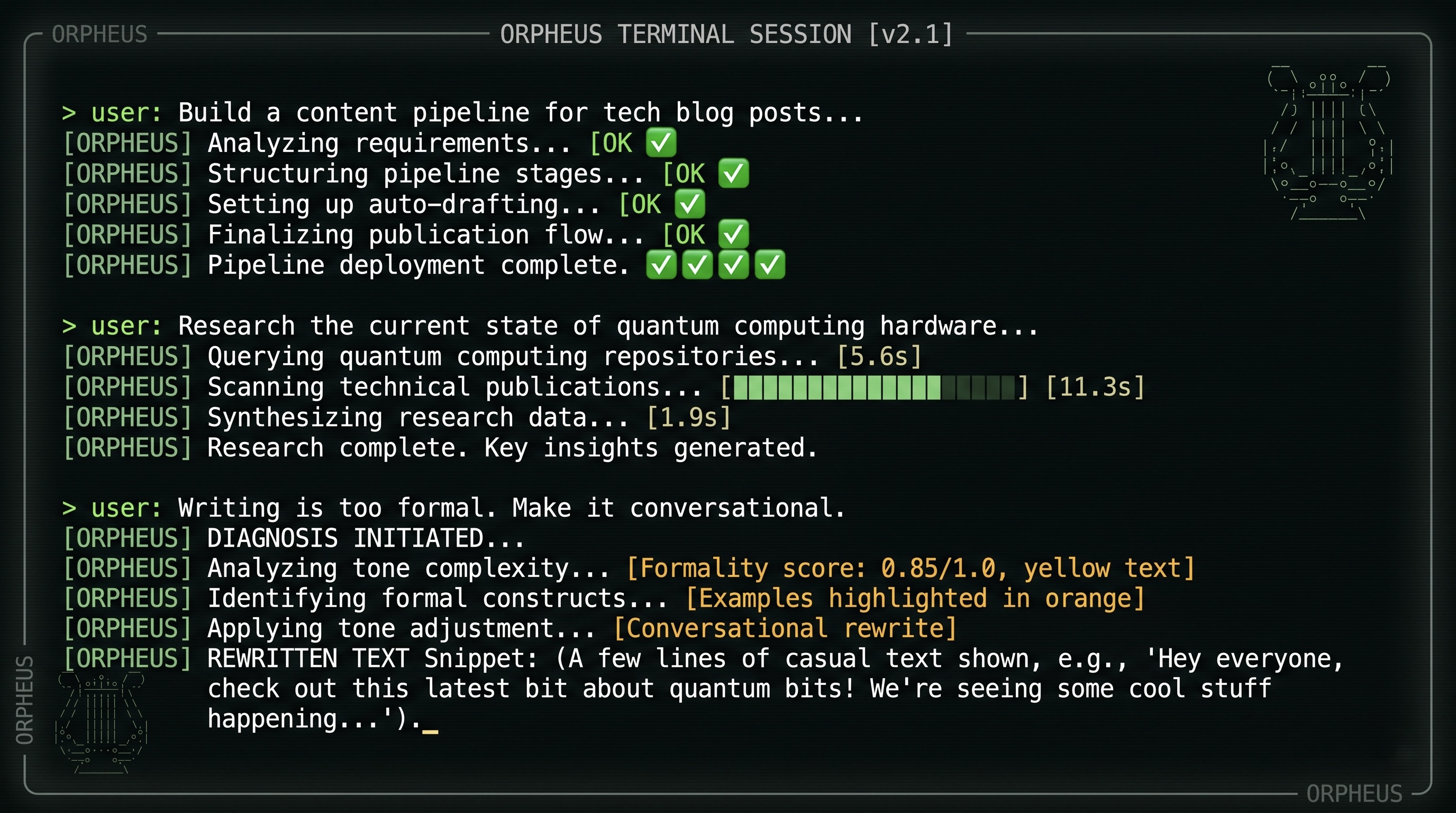

What If You Could Build This in ~5 minutes?

    You: "Build me a content pipeline that researches topics,
          writes articles, reviews for accuracy, and publishes"

    ORPHEUS: ✓ Analyzed → 4 experts, 5 workers, sequential pipeline
             ✓ Generated → orchestrator, contracts, registry, scripts
             ✓ Validated → DAG clean, contracts compatible
             ✓ Ready. Run it whenever you want.

No Python. No YAML configuration. No Docker. No message queues. No deployment.

You describe what you want. ORPHEUS builds it, runs it, and manages its entire lifecycle through conversation. Once you have tested your initial ORPHEUS system, you can keep building on it just by talking.

This is ORPHEUS: Orchestrated Runtime Protocol for Hierarchical Execution Unified Skills. A framework that replaces multi-agent systems with multi-skill systems, where the only infrastructure you need is the coding agent you’re already using.

The Problem ORPHEUS Solves

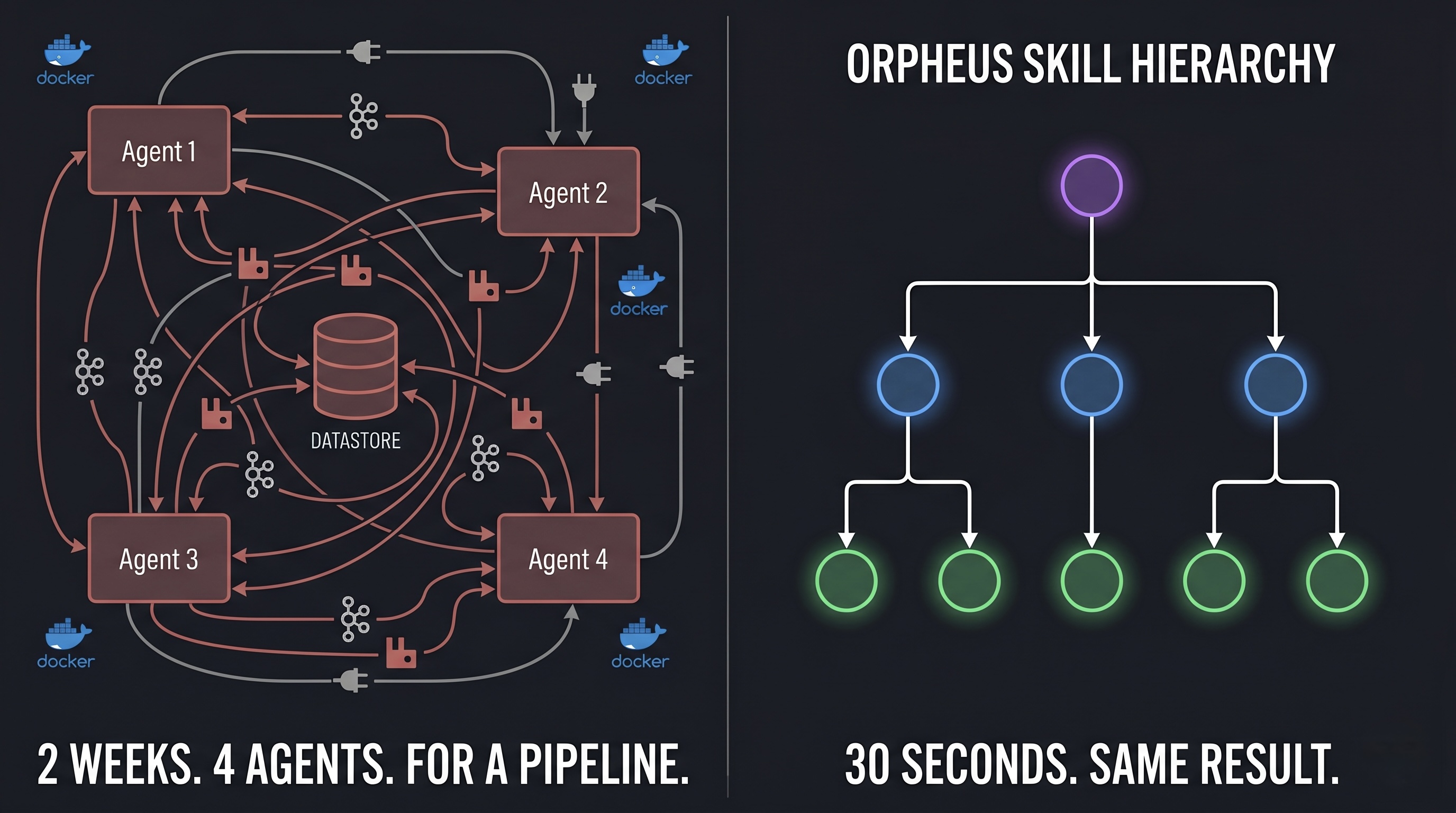

Ever since agents arrived on the AI scene, I’ve been experimenting with multi-agent systems. Four agents. One orchestrator. A message queue between them. A state database. Docker containers. An observability stack. Two weeks of wiring.

The system’s job? Research a topic, write a summary, review it, and save a file.

Four agents. For what was essentially a pipeline.

I looked at the architecture diagram and realized something uncomfortable: most of these “agents” were just a prompt and a tool list wearing a trench coat. They didn’t need their own LLM instance. They didn’t need inter-process communication. They didn’t need independent deployment. They needed a clear role definition and a way to be invoked when needed.

That realization became ORPHEUS.

The Core Idea: Skills Replace Agents

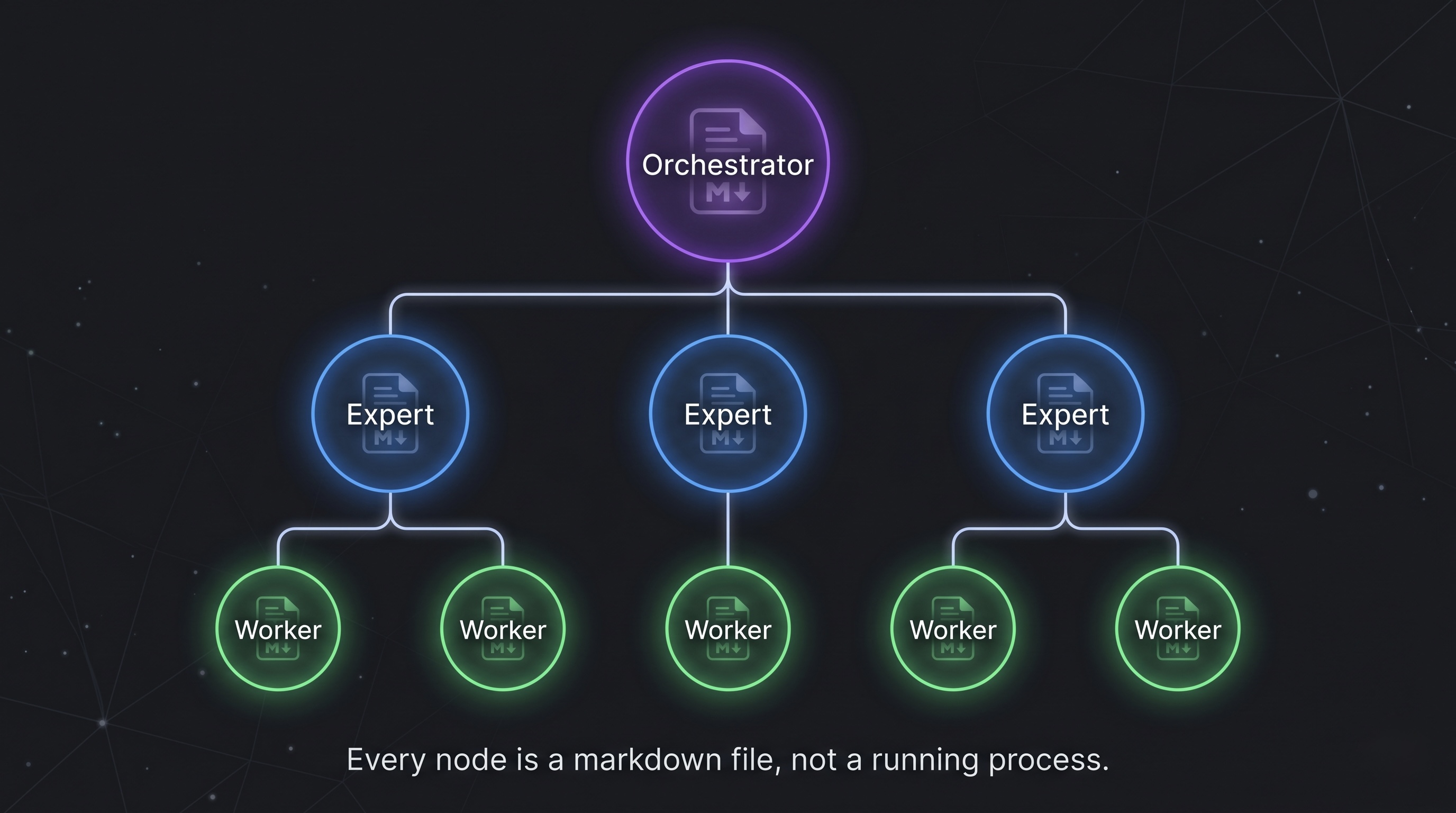

ORPHEUS introduces a skill hierarchy of three composable primitives that handle orchestration without the agent overhead:

    Orchestrator Skill - decomposes requests, manages execution flow
    │
    ├── Expert Skill - domain specialist, owns a job type
    │   ├── Worker Skill - atomic task executor
    │   └── Worker Skill
    │
    └── Expert Skill
        └── Worker Skill

An Orchestrator Skill reads your request, breaks it into jobs, identifies which can run in parallel, and dispatches them. An Expert Skill owns a job type and decides whether to handle it directly or delegate to Worker Skills for atomic operations such as web search, data extraction, or formatting.

The critical difference from multi-agent systems: none of these are separate processes. They’re structured natural language definitions inside markdown files that the coding agent loads and executes. Your coding agent already provides LLM reasoning, tool access, web search, code execution, and subagent spawning. Skills simply structure how those existing capabilities are used.
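To make the Orchestrator's "identify which jobs can run in parallel" step concrete, here is a minimal Python sketch of one way such planning could work: a topological sort that groups jobs into waves. It is an illustration only, not how ORPHEUS is implemented (ORPHEUS expresses this logic in markdown skills, not code). The function names `plan_waves` and `is_dag` and the job names in `pipeline` are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter


def plan_waves(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group jobs into waves; every job in a wave can run in parallel."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises CycleError if the jobs do not form a DAG
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # jobs whose dependencies are done
        waves.append(ready)
        sorter.done(*ready)
    return waves


def is_dag(dependencies: dict[str, set[str]]) -> bool:
    """Return True when the dependency graph has no cycles."""
    try:
        plan_waves(dependencies)
        return True
    except CycleError:
        return False


# Hypothetical content pipeline: each job maps to the jobs it depends on.
pipeline = {
    "research": set(),
    "write": {"research"},
    "review": {"write"},
    "publish": {"review"},
}

print(plan_waves(pipeline))
# [['research'], ['write'], ['review'], ['publish']]
```

A strictly sequential pipeline yields one job per wave. Two jobs that share no dependency would land in the same wave and could be dispatched together.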

| | Multi-Agent | ORPHEUS |
|---|---|---|
| Runtime | N LLM instances | 1 coding agent |
| Communication | HTTP/gRPC/queues | Filesystem |
| Deployment | N services | 1 folder |
| Configuration | Python/YAML code | Natural language |
| New capability | Deploy a service | Write a markdown file |
| Dependencies | pip/npm/docker | None |

ORPHEUS Manages Its Own Lifecycle

Here’s where it gets interesting. ORPHEUS doesn’t just build systems; it manages their entire lifecycle, and it does so using the same skill patterns it creates for users.

The ORPHEUS meta-system has four experts:

| Expert | What It Does | You Say |
|---|---|---|
| Builder | Creates new skill systems from natural language | “Build me a system that…” |
| Doctor | Diagnoses failures, traces root cause, applies fixes | “Why did the research step fail?” |
| Auditor | Runs 7 health checks, produces score and recommendations | “Validate my system” |
| Surgeon | Structural modifications with cascading effect analysis | “Add a fact-checker after research” |

The Builder generates your system. The Doctor fixes it when something breaks. The Auditor validates it before you run it. The Surgeon evolves it when requirements change. All through natural language. All in the same conversation.

    You: "The writing quality has been too formal lately"

    ORPHEUS: 🩺 Doctor: Read execution logs, traced to writing-expert
             instructions. Tone guidance was "comprehensive and thorough"
             which the model interprets as formal.
             ✅ Fix applied: Changed to "clear and conversational while
             maintaining accuracy."
             ➡️ Re-run the pipeline to verify.

    You: "Add a fact-checking expert between research and writing"

    ORPHEUS: 🔧 Surgeon: Analyzed dependency chain.
             + Created fact-check-expert with contract
             ~ Updated orchestrator routing
             ~ Updated registry
             ✅ Validated: DAG clean, contracts compatible

No code. No config files. No restarts. The system evolves through conversation.
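For readers curious what a "contracts compatible" check might involve under the hood, the Python sketch below shows one plausible reading: every field a downstream skill expects must be produced, with the same type, by the skill before it. This is an assumption for illustration; the source does not describe the actual contract format, and the function `contract_problems`, the field names, and the type labels are all hypothetical. You never write this yourself when using ORPHEUS.

```python
def contract_problems(upstream_outputs: dict[str, str],
                      downstream_inputs: dict[str, str]) -> list[str]:
    """Return human-readable mismatches; an empty list means compatible."""
    problems = []
    for field, expected_type in downstream_inputs.items():
        actual_type = upstream_outputs.get(field)
        if actual_type is None:
            problems.append(f"missing field '{field}'")
        elif actual_type != expected_type:
            problems.append(
                f"field '{field}' is {actual_type}, expected {expected_type}"
            )
    return problems


# Hypothetical contracts for the fact-checker example: field name -> type.
research_outputs = {"findings": "list", "sources": "list"}
fact_check_inputs = {"findings": "list", "sources": "list"}
fact_check_outputs = {"verified_findings": "list"}
writing_inputs = {"verified_findings": "list", "tone": "string"}

# research -> fact-check lines up; fact-check -> writing lacks 'tone'.
print(contract_problems(research_outputs, fact_check_inputs))
# []
print(contract_problems(fact_check_outputs, writing_inputs))
# ["missing field 'tone'"]
```

Inserting a new expert into a chain means checking both of its edges: the contract it consumes and the contract it hands on. That is the kind of cascading effect the Surgeon is described as analyzing.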

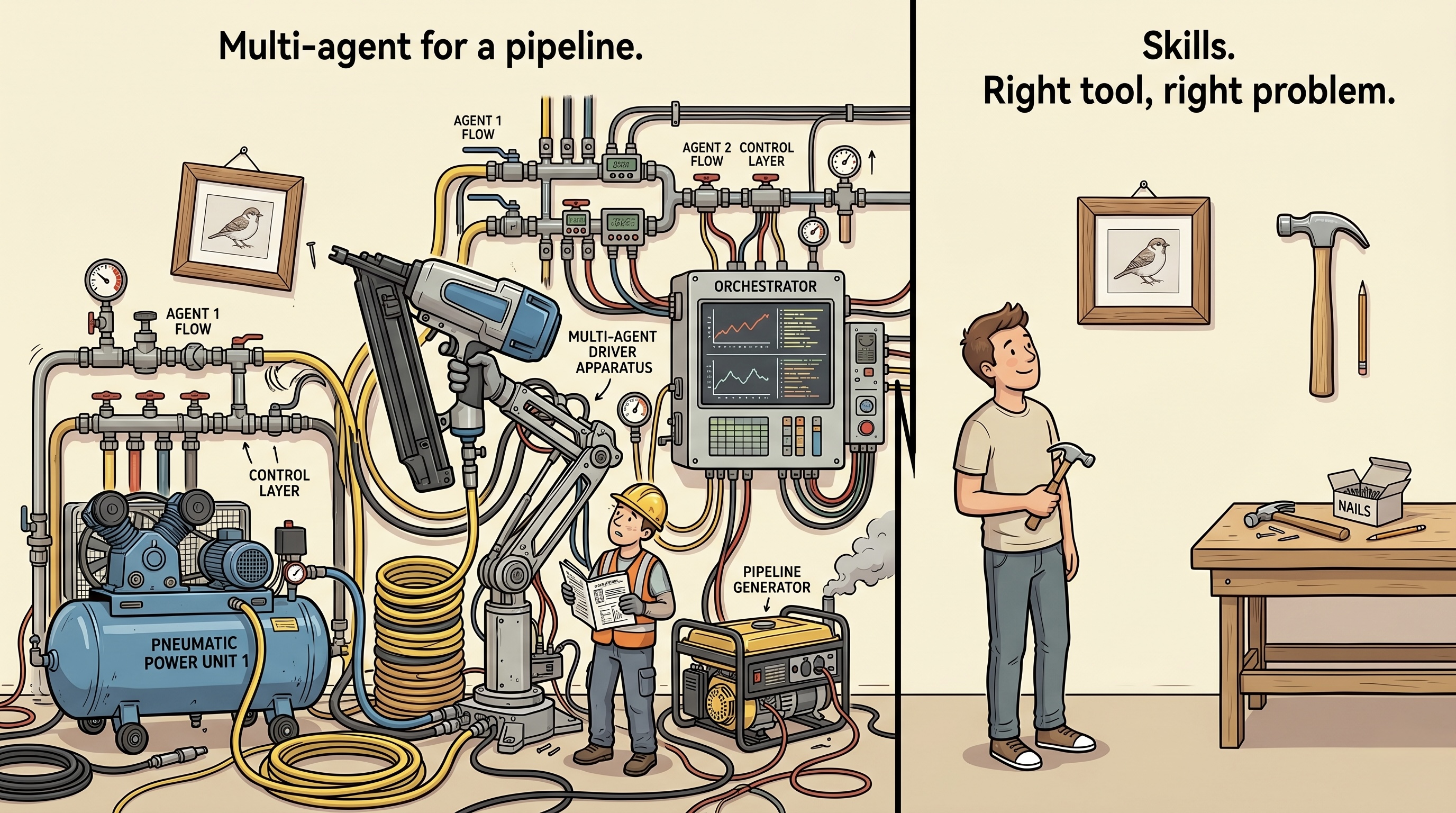

Why This Matters: The Right Tool for the Right Problem

When you need to hang a picture, you reach for a nail and a tap hammer. You don’t bring in a pneumatic framing nailer, an air compressor, and a generator.

The pneumatic nailer is a better tool for framing a house. But for hanging a picture, it’s overhead that adds complexity without adding value.

Multi-agent systems are the pneumatic nailer, powerful and necessary for:

- Long-running autonomous systems with persistent independent state

- Pipelines mixing different LLM providers across different machines

- Systems requiring hard process isolation for fault tolerance

But most AI orchestration tasks are picture-hanging, not house-framing. Most “agents” in production need a clear role definition, not their own infrastructure.

Skills are the tap hammer that was missing from the toolbox. ORPHEUS provides it.

Anthropic Confirms the Direction

In a recent presentation, Barry Zhang and Mahesh Murag from Anthropic described exactly this paradigm shift, independently arriving at the same conclusion.

Watch: Building Effective Agents with Skills - Anthropic (YouTube)

Their argument: stop building monolithic agents. Build modular skills instead. Their key insight:

Modern models are highly intelligent, but they often lack the specific domain expertise needed for complex real-world tasks. Current agents frequently struggle to retain learned knowledge or absorb user expertise effectively.

Their proposed architecture: an agent loop for context management, MCP servers for external connectivity, and a library of skills for domain expertise. Skills as portable, organized collections of files that package procedural knowledge. Progressive disclosure to manage context windows. Non-technical users extending agent capabilities without code.

ORPHEUS aligns with this vision and extends it in three specific ways:

1. Skills that orchestrate skills. Anthropic describes skills as knowledge packages loaded by an agent. ORPHEUS structures skills into a hierarchy where an Orchestrator Skill dispatches Expert Skills that delegate to Worker Skills, bringing the orchestration pattern itself into the skill layer.

2. A self-managing meta-system. Anthropic envisions agents creating their own skills from past successes. ORPHEUS implements this today: the Builder creates systems, the Doctor fixes them, and the Surgeon evolves them, all as skills operating on skills.

3. Self-contained generated systems. When ORPHEUS builds a system, the output is a .orpheus/ folder that contains everything: orchestrator, experts, workers, contracts, scripts. That folder doesn’t need ORPHEUS to run. It’s like a compiled binary: the compiler creates it, but isn’t needed to execute it.

Barry Zhang drew an analogy to computing history: just as operating systems made processors valuable by orchestrating resources, agent runtimes and skills will open a layer where developers encode domain-specific expertise. ORPHEUS is building that layer today.

What Makes ORPHEUS Different

Beyond the skill-based paradigm, ORPHEUS introduces capabilities that don’t exist in other frameworks:

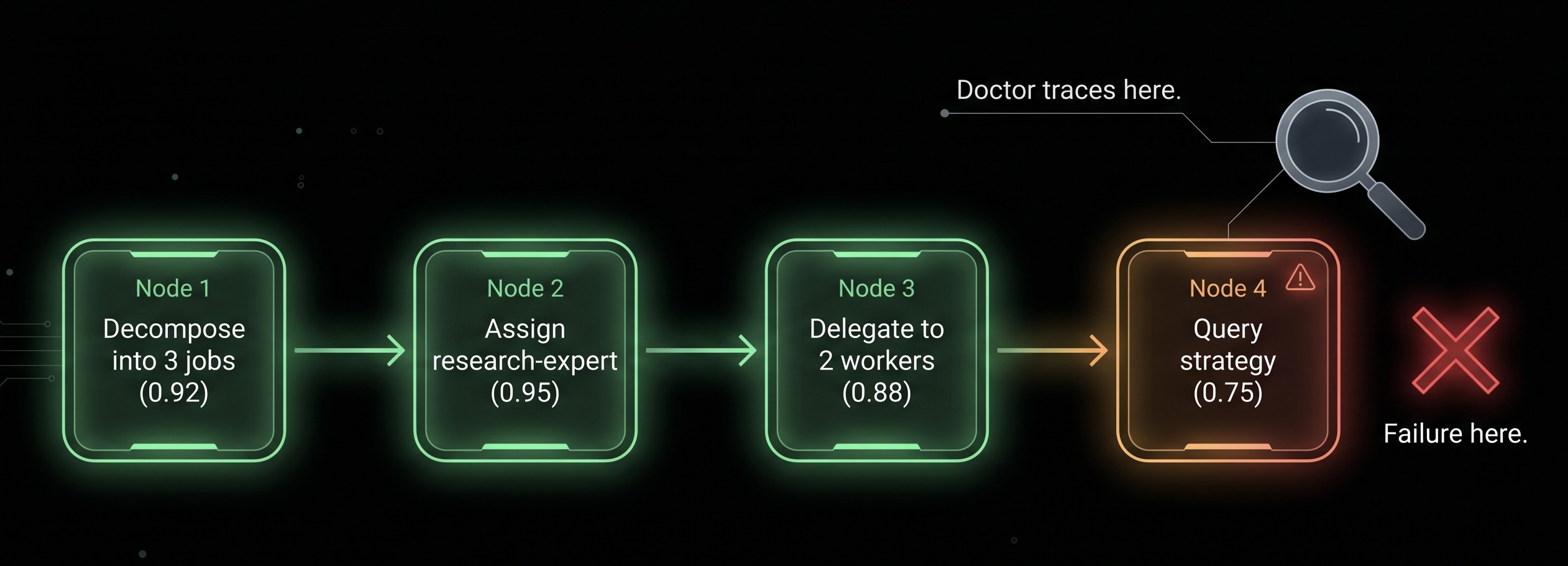

Every Decision Explains Itself

Most systems log what happened. ORPHEUS logs why.

    decision:
      question: "Should this job be delegated to workers?"
      options_considered:
        - "direct execution"
        - "delegate to 2 parallel workers"
      chosen: "delegate to 2 parallel workers"
      reasoning: "Topic is broad, requiring searches across multiple
        sub-domains. Parallel workers cover more ground faster."
      confidence: 0.88

Every decision, from job decomposition to worker selection to query strategy, is logged with what was decided, what alternatives existed, and why. When the Doctor diagnoses a failure, it reads these decision trails to identify whether the reasoning was flawed (fix the instructions) or the data was bad (fix the input). The distinction changes the fix entirely.
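As a rough illustration of the decision record shown above, the following Python sketch models it as a small data structure with two sanity checks, plus a helper that surfaces shaky decisions first. The class `Decision`, the helper `needs_review`, and the 0.7 threshold are hypothetical. The sketch also assumes confidence is on a 0 to 1 scale, which the example value 0.88 suggests but the source does not state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    question: str
    options_considered: tuple[str, ...]
    chosen: str
    reasoning: str
    confidence: float  # assumed to lie in [0, 1]

    def __post_init__(self):
        # A logged choice should be one of the alternatives that were weighed.
        if self.chosen not in self.options_considered:
            raise ValueError("chosen must be one of options_considered")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")


def needs_review(trail: list[Decision], threshold: float = 0.7) -> list[Decision]:
    """Return decisions below the (hypothetical) confidence threshold."""
    return [d for d in trail if d.confidence < threshold]


delegation = Decision(
    question="Should this job be delegated to workers?",
    options_considered=("direct execution", "delegate to 2 parallel workers"),
    chosen="delegate to 2 parallel workers",
    reasoning="Topic is broad, requiring searches across multiple sub-domains.",
    confidence=0.88,
)

print(needs_review([delegation]))
# []
```

Keeping the rejected options alongside the chosen one is what lets a later reader tell a bad choice apart from a reasonable choice made on bad input.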

Errors Never Lose Their Root Cause

In a 3-level system, error messages typically get summarized at each level until the user sees “execution failed” with no useful detail. ORPHEUS requires every level to wrap, not replace, the error from below:

    error:
      level: orchestrator
      message: "Job 'analysis' failed after 2 retries"
      original_error:
        level: expert
        message: "Data lookup failed - worker returned empty"
        original_error:
          level: worker
          message: "WebSearch returned 0 results for query"

The full chain from root cause to symptom is always preserved. Both you and the Doctor always see what actually went wrong.
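The wrap-don't-replace rule can be sketched in a few lines of Python. This is a minimal illustration of the pattern, not ORPHEUS's own code: the field names mirror the error record above, while the class `SkillError` and the helpers `error_chain` and `root_cause` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SkillError:
    level: str
    message: str
    original_error: Optional["SkillError"] = None  # the error from the level below


def error_chain(error: SkillError) -> list[SkillError]:
    """Walk from the outermost symptom down to the root cause."""
    chain = []
    current: Optional[SkillError] = error
    while current is not None:
        chain.append(current)
        current = current.original_error
    return chain


def root_cause(error: SkillError) -> SkillError:
    """The innermost error is the one that actually started the failure."""
    return error_chain(error)[-1]


# Each level wraps the error it received instead of summarizing it away.
worker_err = SkillError("worker", "WebSearch returned 0 results for query")
expert_err = SkillError("expert", "Data lookup failed - worker returned empty", worker_err)
top_err = SkillError("orchestrator", "Job 'analysis' failed after 2 retries", expert_err)

print([e.level for e in error_chain(top_err)])
# ['orchestrator', 'expert', 'worker']
print(root_cause(top_err).message)
# WebSearch returned 0 results for query
```

Because every wrapper keeps a reference to the error beneath it, the symptom the user sees and the root cause a diagnostic tool needs are both available from the same object.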

Natural Language All the Way Down

Every skill in ORPHEUS is a markdown file. Routing rules are markdown tables. Execution protocols are numbered phases. Worker tasks are checklists. Contracts are YAML schemas.

This means anyone who can write clear instructions can build an orchestrated AI system. Anthropic highlighted the same potential in their presentation: skills enable people in finance, recruiting, and legal to extend agent capabilities without programming knowledge. ORPHEUS makes that real today.

Zero Dependencies, Fully Portable

Install ORPHEUS by copying a folder. Generated systems are self-contained: they carry their own runtime scripts. No packages, no services, no infrastructure. The coding agent is the only runtime.

Put a generated .orpheus/ system in a Git repo. Share it with a colleague. Deploy it on a different machine. It works everywhere the coding agent works.

See It In Action

The complete ORPHEUS experience in three interactions:

Build:

    You: "Build a content pipeline: research, write, review, publish"
    ORPHEUS: ✓ 4 experts, 5 workers, validated, ready.

Run:

    You: "Research quantum computing and publish an article"
    ORPHEUS: ⚡ research ✓ 15s → write ✓ 20s → review ✓ 10s → publish ✓ 8s
             Article published.

Fix:

    You: "The writing has been too formal"
    ORPHEUS: 🩺 Traced to instruction phrasing. Fixed. Re-run to verify.

Three prompts. Zero code. Working pipeline that evolves through conversation.

The Bigger Picture

The shift from agents to skills isn’t just about simplifying architecture. It’s about democratization.

Before operating system abstractions, you needed to be an electrical engineer to use a computer. After, anyone could run software. Before high-level languages, you needed to think in machine code. After, you could express intent in something closer to human thought.

The same shift is happening in AI orchestration. Multi-agent frameworks require Python/TypeScript expertise, infrastructure knowledge, and distributed systems experience. Skill-based orchestration requires the ability to describe what you want.

This doesn’t mean multi-agent systems disappear, just as assembly language didn’t vanish when high-level languages arrived. Complex, long-running, multi-model systems will continue to need full agent architectures. But the vast middle ground, the majority of orchestration that’s really just coordinated LLM calls, gets dramatically simpler.

The question isn’t “agents or skills?” It’s “which problems need agents, and which just need the right skill?”

ORPHEUS exists to make that second option real. It’s open source, zero-dependency, and available today.

Try ORPHEUS

Install in 30 seconds and create your automation in a few minutes:

    git clone https://github.com/nuryslyrt/ORPHEUS.git
    cp -r ORPHEUS/skill/ ~/.claude/skills/orpheus/
    chmod +x ~/.claude/skills/orpheus/scripts/*

Then open Claude Code and describe the system you want to build.

| GitHub Repository | Core Principles |

ORPHEUS is licensed under AGPL v3.